By now, most of us are aware of artificial intelligence’s potential to reshape industries. That potential confronts businesses with a critical challenge: harnessing AI’s power innovatively while upholding ethical standards, managing budget constraints, and ensuring regulatory compliance. Adopting a responsible AI framework is not a barrier to progress, but a strategic imperative that enhances business value, builds trust, and mitigates long-term risks.

The transformative potential of artificial intelligence has created undeniable momentum for adoption. From automating routine tasks to unlocking unprecedented insights from complex data, AI technologies offer competitive advantages that few organisations can afford to ignore. Yet this rapid advancement brings with it profound ethical and societal concerns that responsible businesses must address.

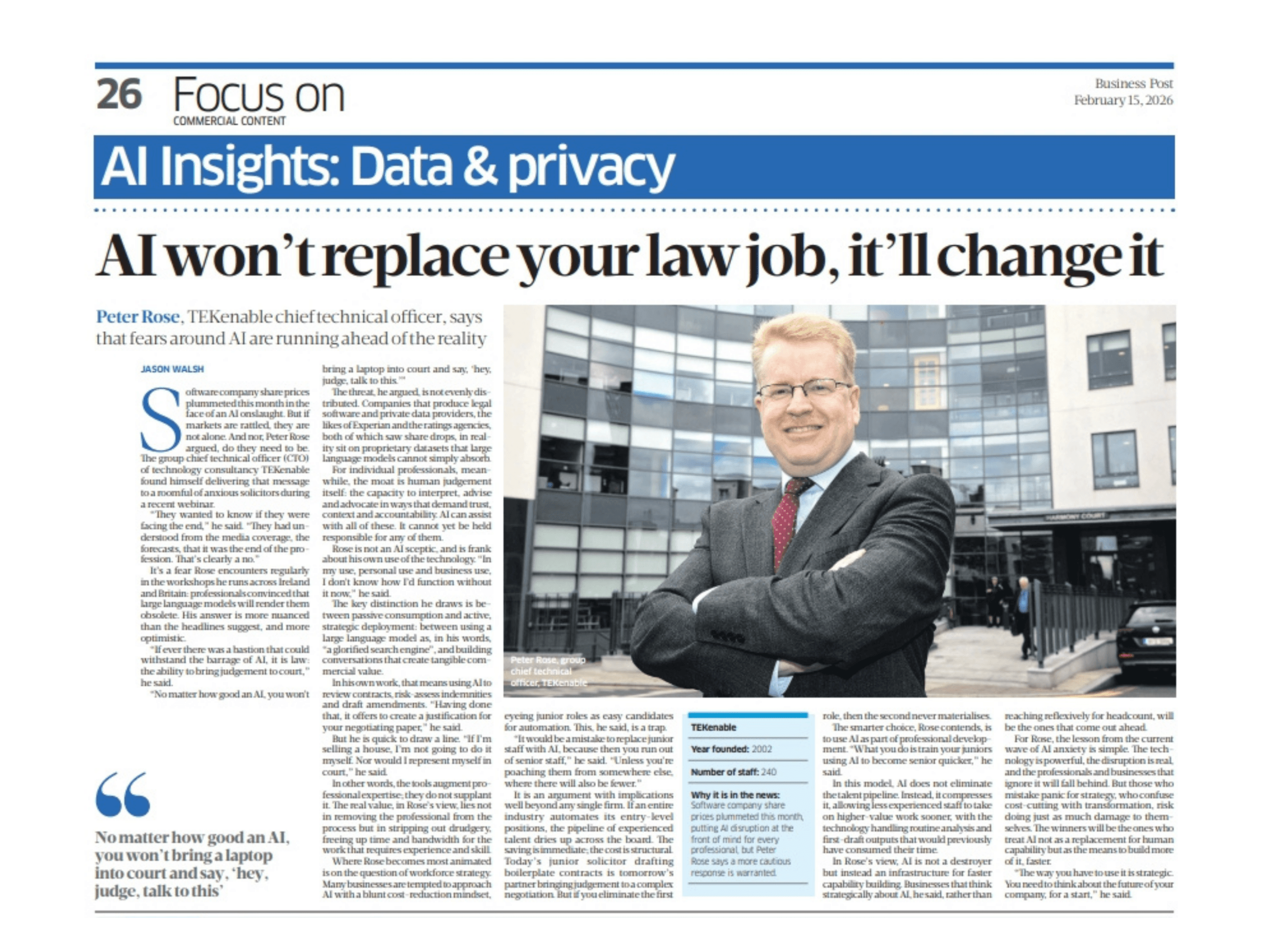

Issues such as algorithmic bias, lack of transparency in decision-making processes, potential job displacement, privacy infringements, and the risk of misuse have all emerged as significant considerations. For business leaders, this creates a core dilemma: how to innovate with AI at pace without sacrificing ethical principles, exceeding budget limitations, or falling foul of increasingly stringent regulations.

The true cost of neglecting AI ethics

The financial implications of ethical failures in AI deployment extend far beyond the immediate development expenses. When organisations treat ethics as an afterthought, they expose themselves to substantial financial, reputational, and legal consequences that can dramatically outweigh the initial cost of implementing responsible practices.

Biased algorithmic outputs can rapidly erode customer trust, particularly among demographics who may already feel marginalised. Non-compliance with regulations such as the EU’s General Data Protection Regulation (GDPR) or Artificial Intelligence Act (EU AI Act) can result in significant financial penalties: under the latter, fines for the most serious breaches can reach €35 million or 7% of worldwide annual turnover, whichever is higher. Perhaps most costly of all is the need to rethink or entirely discontinue AI systems that prove ethically problematic after deployment.

Consider the outcome if a financial services provider invested in an AI-driven loan approval system, only to discover significant biases in its decisions after implementation. The cost of rebuilding the system, potentially compensating affected customers, and repairing reputational damage would likely far exceed what would have been spent on ethical considerations during initial development.

Clearly, this is not how businesses should adopt AI, and most are not approaching it this way: they are well aware of the risks and the regulatory environment. However, risk awareness need not bring projects to a halt.

A foundation for trust

Establishing a robust ethical framework requires commitment to several core principles:

Fairness and non-discrimination

AI systems should be designed and trained to avoid perpetuating or amplifying biases. This involves careful scrutiny of training data, regular testing for discriminatory outcomes, and implementing corrective measures when biases are identified. Techniques such as fairness constraints in algorithms and diverse training datasets can significantly improve equity in AI outputs.
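Regular testing for discriminatory outcomes can start with something as simple as comparing outcome rates across groups. The minimal Python sketch below uses hypothetical loan decisions for two applicant groups, labelled A and B, to compute each group’s approval rate and the disparate impact ratio (lowest rate divided by highest). The 0.8 review trigger borrows the US “four-fifths rule” heuristic and is an assumption here, not a legal threshold; the function names and data are illustrative.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive outcomes (e.g. approvals) per group.

    `decisions` is an iterable of (group, approved) pairs, where approved is a bool.
    """
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {g: approvals[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so approval rates do not differ
    return min(rates.values()) / highest


# Hypothetical audit data: (applicant group, loan approved?)
decisions = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 42 + [("B", False)] * 58
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)            # {'A': 0.6, 'B': 0.42}
print(round(ratio, 2))  # 0.7
if ratio < 0.8:  # assumed review trigger based on the four-fifths heuristic
    print("Flag for review: approval rates differ materially between groups")
```

A single ratio is only a starting point: a fuller audit would also compare error rates (such as wrongful rejections) per group, since equal approval rates can hide unequal mistakes.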

Transparency and explainability

The “black box” problem – where even developers cannot fully explain how AI systems reach specific conclusions – undermines accountability and trust. Explainable AI approaches prioritise models and methods that can be meaningfully understood by relevant stakeholders, whether they are developers, regulators, or end-users.

Accountability and governance

Clear lines of responsibility must be established for AI systems and their impacts. This includes designated oversight roles, documented decision-making processes, and explicit policies for addressing unintended consequences or harms.

Privacy protection

AI systems often rely on vast amounts of data, making robust data protection essential. Privacy-enhancing technologies, data minimisation principles, and transparent data usage policies help ensure that AI advancement doesn’t come at the expense of individual privacy rights.
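Data minimisation and pseudonymisation can be as simple as keeping only the fields a model needs and replacing direct identifiers with a keyed hash. The sketch below uses Python’s standard `hmac` and `hashlib` modules; the field names, the `minimise` helper, and the inline key are hypothetical. Pseudonymised data generally still counts as personal data under GDPR, so this reduces privacy risk rather than removing it.

```python
import hashlib
import hmac

# Fields the model actually needs (assumed for illustration).
ALLOWED_FIELDS = {"income", "loan_amount", "term_months"}


def minimise(record: dict, secret_key: bytes) -> dict:
    """Drop unneeded fields and replace the customer ID with a keyed pseudonym."""
    slim = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    # HMAC-SHA256 lets records for the same customer be linked without storing
    # the raw ID; the key must be kept separately from the data set.
    slim["customer_ref"] = hmac.new(
        secret_key, record["customer_id"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return slim


raw = {
    "customer_id": "C-1042",
    "name": "Jane Doe",
    "email": "jane@example.com",
    "income": 52000,
    "loan_amount": 15000,
    "term_months": 36,
}
# In practice the key would come from a secrets manager, not source code.
print(minimise(raw, secret_key=b"replace-with-a-securely-stored-key"))
```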

Security and reliability

AI applications must be secure against manipulation and consistently perform as intended. This requires rigorous testing across diverse scenarios, regular security audits, and built-in safeguards against adversarial attacks or data poisoning.

Human oversight

Even the most sophisticated AI systems benefit from appropriate human supervision. Deciding where and how humans should remain “in the loop” helps ensure that AI augments rather than replaces human judgment, particularly in high-stakes decisions.
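One common way to decide where humans stay “in the loop” is to send a decision to a reviewer whenever the model is unsure or the case is high-stakes. The minimal sketch below assumes a model that outputs a probability of a positive outcome; the 0.9 confidence threshold, the `high_stakes` flag, and the `route` function are hypothetical policy choices, not recommended settings.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    outcome: str  # "approve", "decline" or "human_review"
    reason: str


def route(probability: float, high_stakes: bool, threshold: float = 0.9) -> Decision:
    """Automate only confident, low-stakes decisions; escalate everything else.

    `probability` is the model's estimated probability of a positive outcome.
    `threshold` is an assumed policy value.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if high_stakes:
        return Decision("human_review", "high-stakes cases are always reviewed by a person")
    # Confidence in whichever outcome the model leans towards.
    confidence = max(probability, 1.0 - probability)
    if confidence < threshold:
        return Decision("human_review", f"model confidence {confidence:.2f} below {threshold}")
    outcome = "approve" if probability >= 0.5 else "decline"
    return Decision(outcome, "automated: confident, low-stakes case")


print(route(0.97, high_stakes=False).outcome)  # approve
print(route(0.03, high_stakes=False).outcome)  # decline
print(route(0.70, high_stakes=False).outcome)  # human_review
print(route(0.99, high_stakes=True).outcome)   # human_review
```

Sending high-stakes cases to a person regardless of confidence reflects the fact that a confident model is not necessarily a correct one.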

Practical frameworks for ethical AI governance that foster innovation

Moving beyond theoretical principles to actionable implementation requires structured approaches that balance ethical considerations with practical business realities.

Organisations leading in responsible AI typically establish dedicated governance bodies that bring together diverse perspectives – technical experts, legal advisors, business strategists, and ethics specialists. Rather than serving as innovation gatekeepers, these boards can provide valuable guidance that improves both the ethical quality and business value of AI initiatives.

Bias audits and mitigation tools

A growing ecosystem of technical tools enables organisations to detect and address biases throughout the AI lifecycle. From pre-processing techniques that balance training data to post-deployment monitoring systems that flag unexpected patterns, these resources make bias mitigation increasingly feasible within standard development workflows.
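As one example of a pre-processing technique that balances training data, the sketch below implements reweighing: each (group, label) combination receives the weight P(group) × P(label) / P(group, label), estimated from counts, so that group membership and outcome become statistically independent in the weighted data. The data set and helper names are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-example weights that make group and label independent once weighted.

    weight(g, y) = P(g) * P(y) / P(g, y), estimated from training-set counts.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(group, groups, labels, weights):
    """Share of (weighted) positive labels within one group."""
    num = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    den = sum(w for g, w in zip(groups, weights) if g == group)
    return num / den


# Hypothetical training data: group B has far fewer positive labels than group A.
groups = ["A"] * 6 + ["B"] * 4
labels = [1, 1, 1, 1, 0, 0] + [1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
for g in ("A", "B"):
    print(g, round(weighted_positive_rate(g, groups, labels, weights), 2))
# A 0.5
# B 0.5
```

The weights can be passed to any training routine that accepts per-sample weights, such as the `sample_weight` argument of many scikit-learn estimators’ `fit` methods. Because reweighing changes how much each example counts rather than editing the records, the original data stays intact for auditing.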

Data governance frameworks

Responsible AI requires exceptional attention to data quality, provenance, and representation. Establishing clear protocols for data collection, labelling, and usage – specifically tailored to the demands of AI systems – creates a foundation for both ethical and effective outcomes.

Structured risk assessment

Frameworks such as the NIST AI Risk Management Framework provide systematic approaches for identifying, assessing, and mitigating AI-specific risks without impeding innovation. These structured methodologies help organisations anticipate potential issues early, when they are less costly to address.

“Red teaming” exercises

Borrowing from cybersecurity practices, this approach involves dedicated teams attempting to identify ethical vulnerabilities or unintended consequences in AI systems before deployment. This proactive testing reveals blind spots that developers might otherwise miss.

Iterative development with ethical feedback loops

Rather than treating ethics as a final checkpoint, leading organisations integrate ethical considerations throughout agile development cycles. This allows for continuous improvement in both performance and responsibility.

“Ethics by design” approach

The perception that ethical AI necessarily incurs prohibitive costs often stems from treating ethics as an add-on rather than an integral element of development. In practice, integrating ethical considerations from the outset of AI projects is generally far more cost-effective than remediating problems after they emerge. This approach folds ethical analysis into existing planning and development processes, minimising additional resource requirements.

Prioritisation based on risk and impact

Not all AI applications carry equal ethical risk, a reality reflected in regulatory frameworks such as the EU AI Act. By categorising projects according to their potential impact on individuals and society, organisations can allocate ethical resources strategically, focusing intensive review on high-risk applications while streamlining processes for lower-risk use cases.
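A lightweight internal triage can mirror this risk-based logic. The sketch below borrows the EU AI Act’s broad tier names (unacceptable, high, limited, and minimal risk) and maps a few example use cases onto hypothetical internal review tracks. The use-case keys, the mapping, and the track descriptions are illustrative assumptions and no substitute for a legal assessment against the Act itself.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative mapping only: real classification needs legal review of the Act.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "credit_scoring": RiskTier.HIGH,
    "cv_screening": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}

# Hypothetical internal review tracks, from most to least intensive.
REVIEW_TRACK = {
    RiskTier.UNACCEPTABLE: "do not build",
    RiskTier.HIGH: "full governance board review, bias audit, human oversight plan",
    RiskTier.LIMITED: "transparency check (tell users they are interacting with AI)",
    RiskTier.MINIMAL: "standard engineering review",
}


def review_track(use_case: str) -> str:
    """Return the review track for a use case, defaulting unknown cases to high risk."""
    tier = USE_CASE_TIERS.get(use_case, RiskTier.HIGH)
    return REVIEW_TRACK[tier]


for case in ("credit_scoring", "customer_chatbot", "spam_filter", "new_idea"):
    print(f"{case}: {review_track(case)}")
```

Defaulting unclassified use cases to the high-risk track is a deliberately conservative choice: a new project can only take a lighter path once someone has explicitly classified it.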

The tangible business value of responsible AI

Far from being merely a cost centre or compliance necessity, responsible AI delivers concrete commercial benefits that strengthen the business case for ethical practices.

Done right, for instance, AI can enhance customer trust and differentiate a brand. As AI systems increasingly influence consequential decisions, organisations that demonstrate transparent and fair practices gain a significant trust advantage. In consumer-facing industries, ethical commitments can become powerful differentiators in crowded marketplaces.

Likewise, an ethics-first approach to the technology reduces long-term risks and liabilities. Notably, the EU AI Act, along with sector-specific regulations, introduces substantial penalties for non-compliant systems. Organisations with robust ethical frameworks mitigate these risks while avoiding costs associated with withdrawing problematic applications or defending against litigation.

Ethical AI practices such as comprehensive testing, bias mitigation, and transparent design frequently improve technical performance as well. Models developed with diverse, representative data typically generalise better across user populations, while explainable approaches facilitate more effective debugging and refinement.

Navigating the evolving global AI regulatory landscape

As regulatory regimes evolve globally, organisations with established ethical practices adapt more seamlessly to new requirements. The EU AI Act’s risk-based approach, for instance, imposes obligations that vary with an application’s potential impact, so organisations already conducting ethical risk assessments will find compliance substantially less burdensome.

However, the regulatory environment for AI continues to develop rapidly, with significant implications for businesses operating across borders.

For instance, the EU AI Act establishes a comprehensive framework categorising AI applications by risk level, with corresponding requirements for high-risk systems such as risk assessments, human oversight, and transparency measures. The UK’s approach emphasises voluntary measures and sector-specific guidance, while the US pursues a patchwork of federal agency rules and state-level legislation.

In this dynamic environment, organisations benefit from adaptive governance structures that can respond to regulatory developments without disrupting innovation cycles. By establishing principles-based frameworks aligned with emerging global standards, businesses can build resilience against regulatory fragmentation.

Conclusion: the strategic imperative for sustainable innovation

Ethical AI implementation is not simply a trade-off against innovation or cost-effectiveness. Rather, it represents a strategic approach that enhances the sustainability, trustworthiness, and ultimate value of AI investments.

The journey toward responsible AI requires integrating people, processes, and technology in service of ethical principles – not as constraints on innovation, but as guidelines that channel technological advancement toward sustainable competitive advantage. By addressing ethical considerations within practical frameworks that acknowledge business realities, organisations can harness AI’s transformative potential while building the trust essential for long-term success.

As AI capabilities continue to advance, the organisations that thrive will be those that recognise responsible implementation as not merely a compliance exercise, but a fundamental business imperative that creates enduring value for customers, employees, and shareholders alike.

Is your organisation prepared to innovate responsibly with AI? Contact TEKenable for expert guidance on establishing an ethical AI framework that aligns with your business goals, budget, and the evolving regulatory environment to build a future where AI benefits everyone.

Ethical AI Adoption FAQs:

What does “Ethical AI Adoption” mean?

Ethical AI adoption refers to the responsible integration of artificial intelligence technologies into business operations, ensuring that AI systems align with human values, protect rights, and avoid harm. This includes transparency, fairness, accountability, and respect for privacy and autonomy.

Why is ethical AI important for businesses?

Unethical AI can lead to biased decisions, privacy violations, reputational damage, and legal consequences. Ethical AI builds trust with customers and stakeholders, ensures compliance with regulations like the EU AI Act and GDPR, and supports sustainable innovation.

What are the key risks of unethical AI use?

- Bias and discrimination in hiring, lending, or profiling.

- Lack of transparency in decision-making.

- Data misuse and privacy breaches.

- Job displacement and workforce disruption.

- Algorithmic manipulation and lack of accountability.

How can organisations ensure ethical AI deployment?

- Conduct AI impact assessments (e.g., TEKenable’s Responsible AI framework).

- Implement human oversight and avoid full automation in critical decisions.

- Use diverse training data and monitor for bias.

- Ensure explainability and transparency in AI systems.

- Align AI use with corporate values and legal standards.

What role does regulation play in ethical AI?

Regulations such as the EU AI Act and GDPR, along with non-binding instruments such as UNESCO’s Recommendation on the Ethics of Artificial Intelligence, provide frameworks to guide responsible AI use. Together, they aim to protect human rights, ensure fairness, and prevent misuse of AI technologies.

How can businesses prepare for ethical AI adoption?

- Start with an AI Readiness Assessment to evaluate current systems and data quality.

- Educate teams through AI upskilling workshops and ethical training.

- Develop a clear business case and secure executive buy-in.

- Address cultural and process changes to support ethical integration.

Can AI be ethical by design?

Yes, but it requires intentional design choices. AI should be built to augment human decision-making, not replace it, especially in areas affecting health, rights, or dignity. Ethical design includes safeguards, fallback mechanisms, and continuous monitoring.