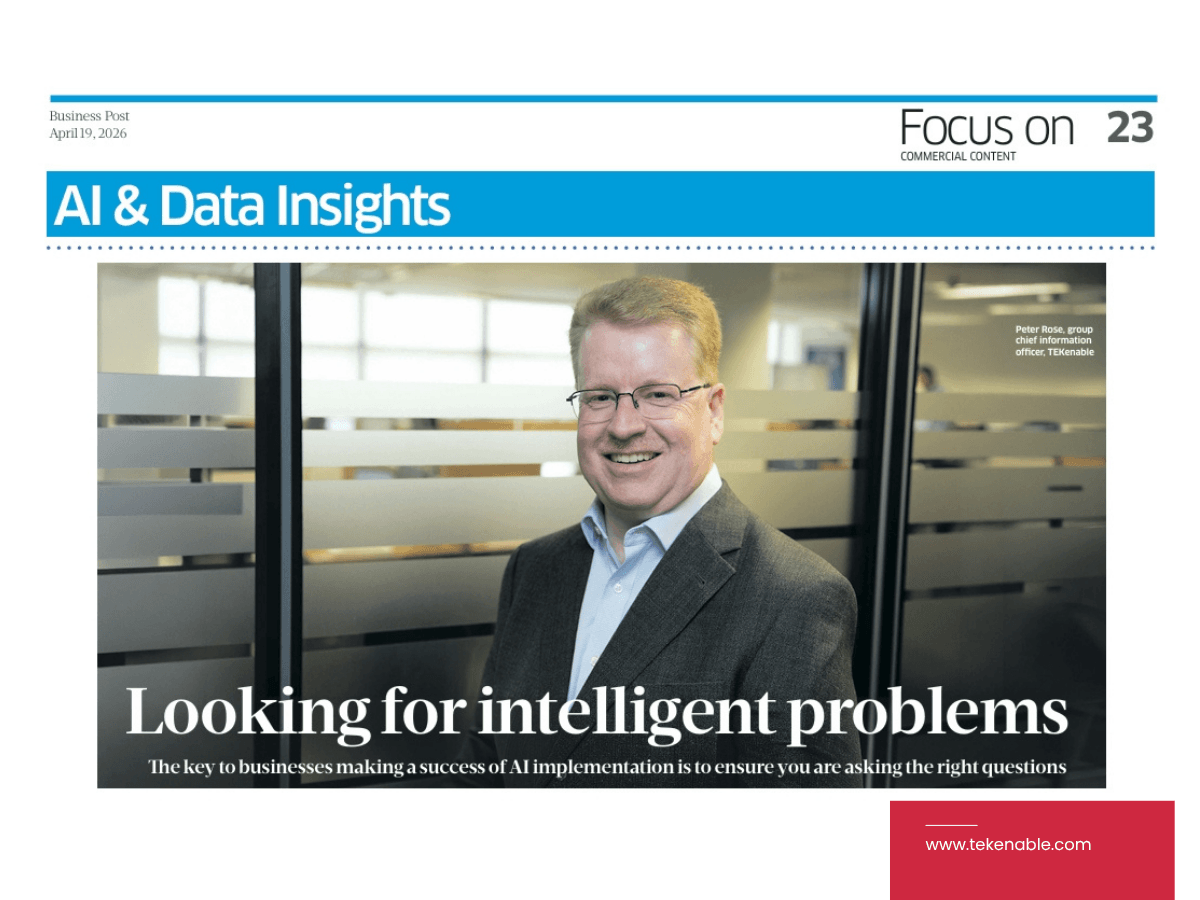

It’s not a secret that businesses are thinking about artificial intelligence and, as news reports remind us, many are implementing it. However, what they are getting in return varies, said Peter Rose, group chief information officer at TEKenable.

Indeed, there is a particular kind of boardroom conversation happening right now in organisations across every sector: someone decides they need to be doing something with AI, so a team is assembled and tools are evaluated. A use case is found and a proof of concept is built, only for everything to go silent six months later.

Rose has seen both ends of the spectrum: the implementations that transform organisations and the ones that consume budget and enthusiasm in roughly equal measure.

“A solution looking for a problem,” he said, “tends to lead to fractional business cases.”

In other words, the kind of AI application that shaves a few minutes off someone’s working day but delivers no meaningful change to the organisation as a whole.

Unless, that is, the organisation is enormous. “If you have 10,000 people each saving five to ten minutes on a task, that makes a difference, but most organisations don’t have that scale, so small incremental improvements just don’t move the needle.”

The result is a graveyard of proofs of concept: technically functional, strategically inert.

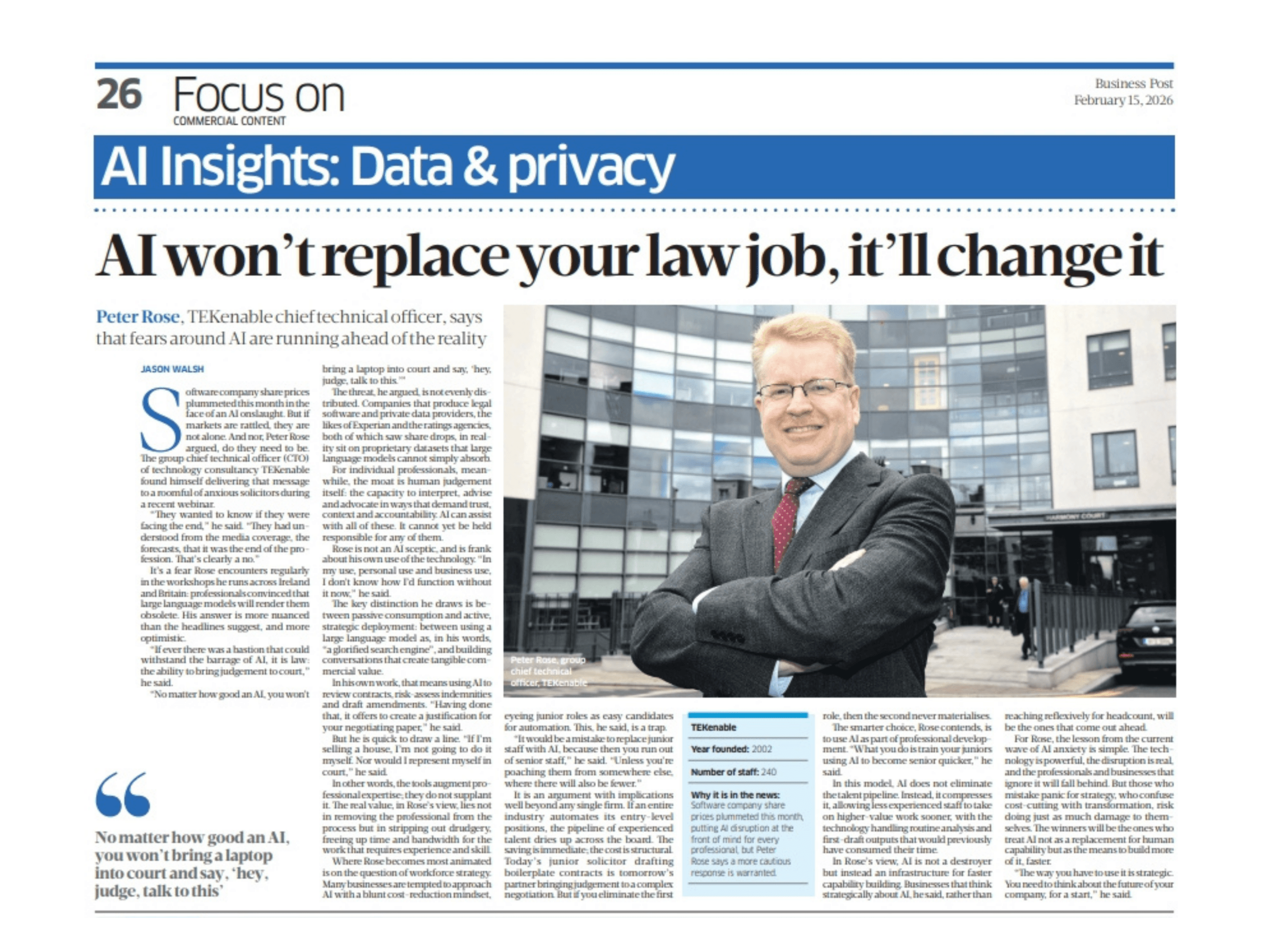

Rose is equally direct about a second failure mode: the fear of AI making a mistake. It is, he argues, a fear that is rarely applied consistently.

“The idea that AI is a technology, and that technology has to be perfect or near perfect, leads to process designs where an AI making a mistake is seen as a terrible failure,” he said. “A completely different process would have been designed for a human in the same role.”

The expectation, in other words, has to shift: we need to understand that AI will not behave like a traditional computer system. Instead, it will behave more like a person: capable, occasionally wrong, and in need of processes designed around that reality rather than against it.

The organisations getting this right, Rose said, are those that have inverted the usual logic entirely. Rather than acquiring AI capability and then casting around for somewhere to deploy it, they begin with a specific, meaningful problem and work from there.

“What everybody needs is to actually find out what the problem is, and then work out how to solve it.”

The same principle applies to data. He recalls an era when the instinct was to build vast data warehouses and fill them with as much information as possible, on the assumption that insight would eventually emerge. Sometimes it did. Often it did not.

“The key today,” Rose said, “is to identify what insight you are seeking, what that would enable you to do that you cannot do today, and what the impact of that would be, and then gather only the data needed to support that single objective.”

It is a discipline that runs counter to the accumulation instinct, and it demands a clarity of purpose that many organisations find uncomfortable. But it is also what separates a genuine business case from an expensive experiment.

Rose points to the public sector as an example of what purposeful AI adoption can look like. At its best, he said, public sector AI addresses a substantial, meaningful problem, as opposed to some mere efficiency or technology demonstration dressed up as strategy.

Seen this way, the contrast with the proof-of-concept treadmill is stark. Nevertheless, the challenge is particularly acute for mid-sized organisations. These businesses find themselves caught between the high risk tolerance of startups, which will build fast and iterate in public, and the scale of enterprises, for whom even small efficiency gains aggregate into real value.

This middle ground bears the heaviest compliance and risk burden while lacking the headroom to absorb it. Incremental improvements, for them, are rarely enough.

The middle ground has to think about risk and compliance, but it lacks the scale, so small incremental improvements aren’t enough. They just don’t move the needle for these organisations, Rose said.

Despite this, he said, AI’s capabilities have advanced considerably, to the point where AI tools can be used to interrogate the output of another AI, something that can assist with oversight.

With AI applications in business and the public sector, the technology is no longer the limiting factor, said Rose.

“What everybody needs is to actually find out what the problem is, and then work out how to solve it.”