AI compliance must go beyond box-ticking

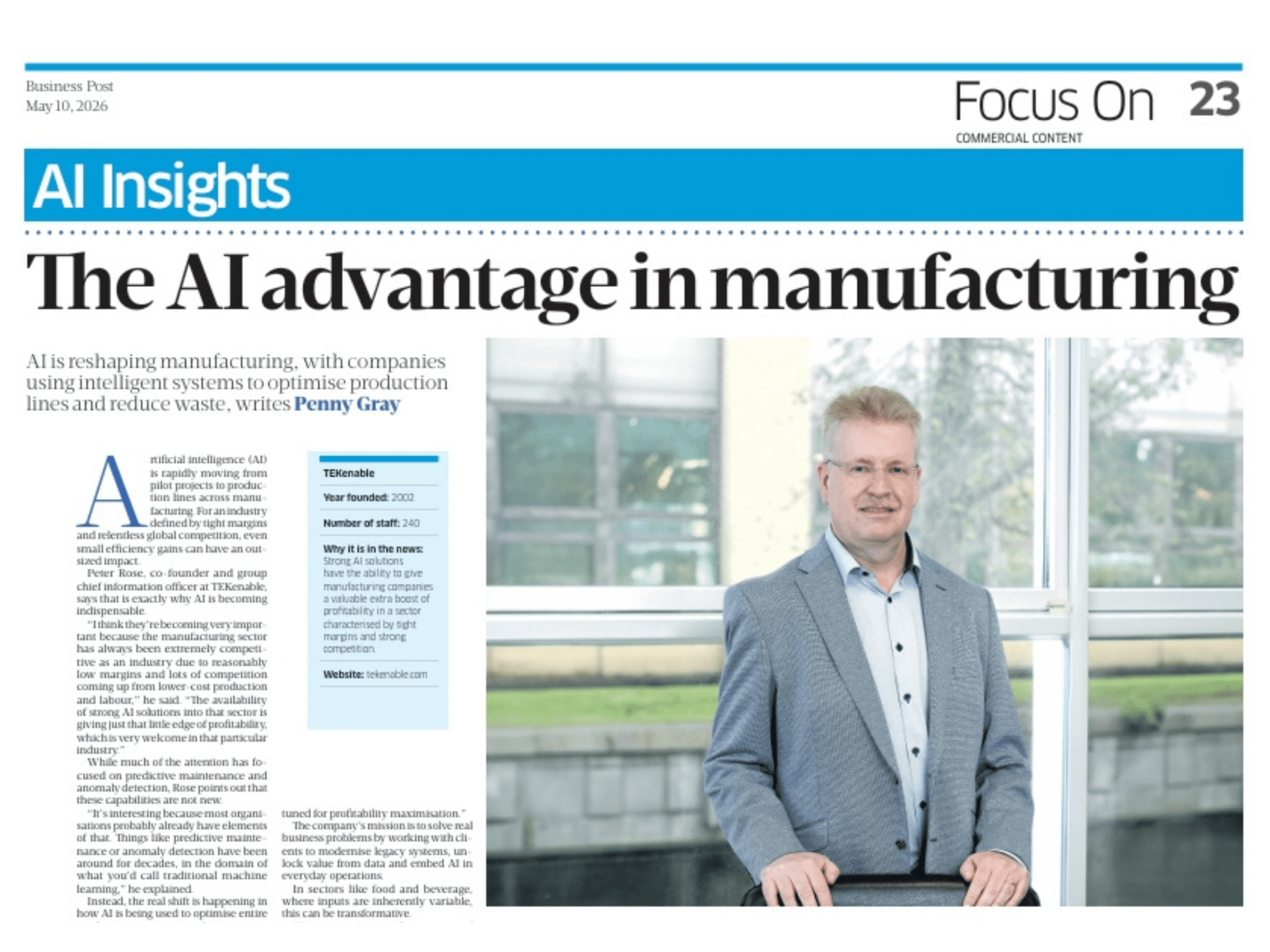

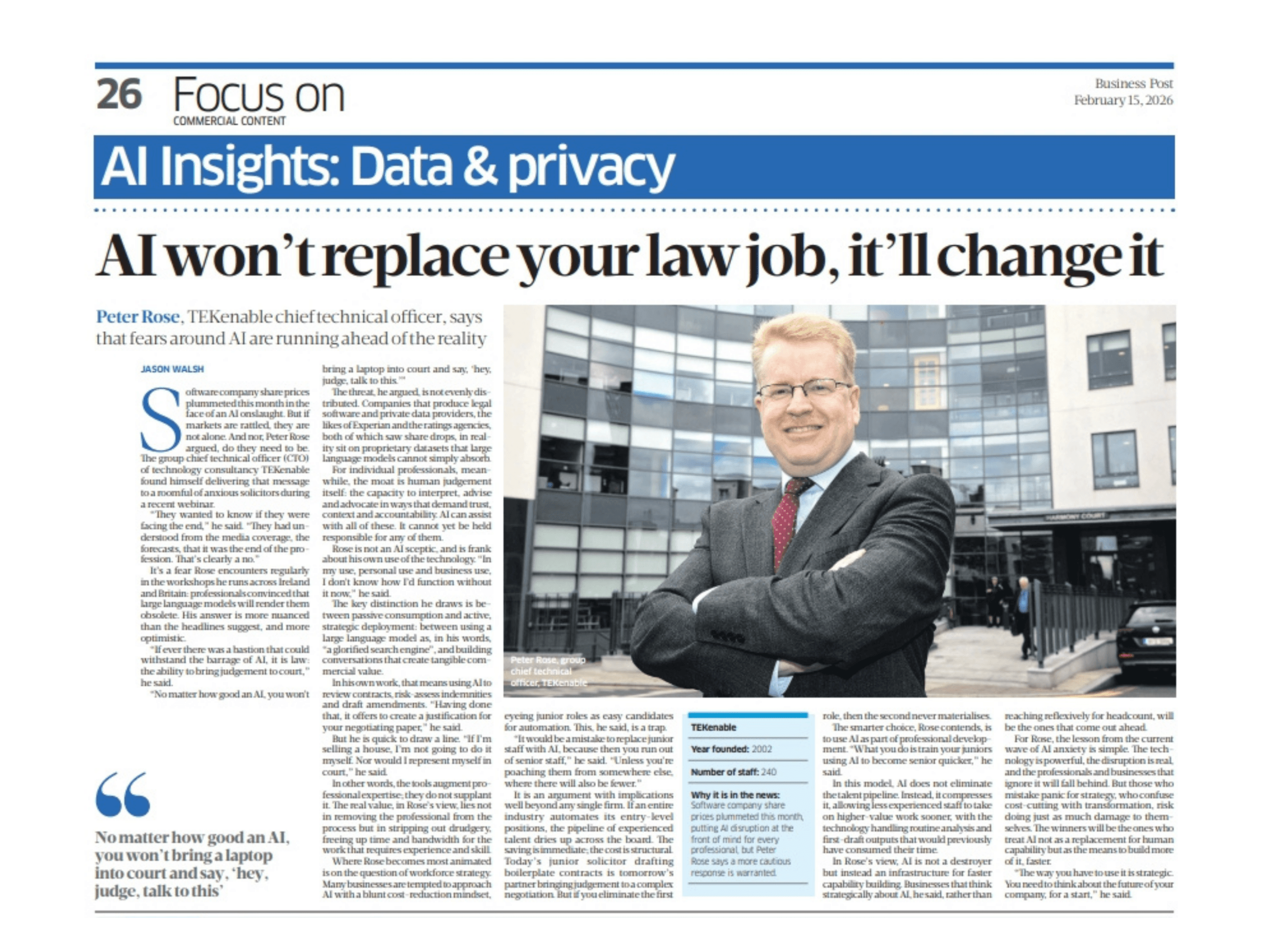

– Peter Rose, Group Chief Technical Director, TEKenable

Artificial intelligence (AI) and machine learning (ML) are already having an impact on a wide range of industries, but their success depends on a disciplined, thoughtful approach to compliance and risk management.

Indeed, while the introduction of machine intelligence has proved exciting for businesses and governments everywhere, fears of it skewing data through hallucinations or even discriminating against individuals and groups have led to rafts of new legislation that demands compliance.

Fundamentally, organisations that want to enjoy the benefits of AI, whether that is simple efficiency or opening up new business opportunities based on data, need to manage the risk inherent in creating, publishing and continuing to apply machine learning models, said Peter Rose, group chief technical director at software developer TEKenable, which has deployed AI tools for a range of clients.

The first thing to do, he said, is to understand AI – and that means getting beyond prompting or even coding and taking a wide-angle view of the technology and how you plan to use it.

For instance, creating and publishing are distinct things in AI. “Creating is the training process, whereas publishing is putting it into production,” Rose said.

It sounds simple, but while AI represents a step-change in software, it still requires the same intensity of focus as conventional software development, not least because ensuring compliance demands meticulous documentation.

This is particularly important in the context of the EU Artificial Intelligence Act (EU AI Act), the new Europe-wide regulation that demands AI be subject to proper management, data quality assurance, transparency and human oversight.

“People are well used to thinking about software life cycles and iterations, but some think the same discipline doesn’t need to be applied to AI models,” said Rose.

“In fact, you still need that same engineering discipline: everything has to be version controlled, tested and kept, to allow for reversion, and published.”

At TEKenable, AI tools are deployed in the cloud. The process follows best practices in data-ops, ML-ops and AI-ops, and includes version-controlling training data to ensure it remains bias-free.
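As a rough illustration of what version-controlling training data can involve, the sketch below fingerprints a dataset and records that fingerprint alongside a model version, so a published model can be traced back to the exact data it was trained on. It is not TEKenable's tooling: the function names `dataset_fingerprint` and `manifest_entry`, the manifest fields and the sample rows are all assumptions made for the example.

```python
import hashlib
import json


def dataset_fingerprint(rows):
    """Return a SHA-256 hex digest that changes if any row changes."""
    digest = hashlib.sha256()
    for row in rows:
        # sort_keys makes the serialisation independent of key order
        digest.update(json.dumps(row, sort_keys=True).encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()


def manifest_entry(model_version, rows):
    """Tie a model version to the exact training data it was built from.

    The field names are hypothetical, chosen for this illustration.
    """
    return {
        "model_version": model_version,
        "row_count": len(rows),
        "data_sha256": dataset_fingerprint(rows),
    }


# Made-up sample rows, not real data
training_rows = [
    {"age": 25, "destination": "Ibiza"},
    {"age": 57, "destination": "Lake Garda"},
]

entry = manifest_entry("1.0.0", training_rows)
print(json.dumps(entry, indent=2))
```

Storing an entry like this next to each trained model means that reverting to an earlier model version also identifies which version of the data it came from.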

“The concept of Jupyter notebooks for example, which are a combination of code and documentation all in one, is great for testing and analysis of a dataset to prove there is no bias in a dataset,” said Rose.
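The kind of check that might sit in such a notebook can be sketched in a few lines. The example below compares how often a positive outcome appears for each group in a dataset and reports the ratio between the lowest and highest rates. This is only one of many possible bias measures, and a ratio close to 1.0 does not on its own prove that a dataset is free of bias. The function names, the field names `group` and `approved`, and the sample records are assumptions made for illustration.

```python
from collections import defaultdict


def selection_rates(records, group_key, outcome_key):
    """Share of records with a positive outcome, per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[outcome_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest rate divided by highest rate; 1.0 means all groups are equal."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no positive outcomes anywhere, so no disparity to measure
    return min(rates.values()) / highest


# Made-up records, not real data
sample = [
    {"group": "A", "approved": True},
    {"group": "A", "approved": True},
    {"group": "A", "approved": False},
    {"group": "A", "approved": False},
    {"group": "B", "approved": True},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
]

rates = selection_rates(sample, "group", "approved")
print(rates)
print(disparity_ratio(rates))
```

A low ratio is a prompt for closer investigation rather than a verdict. What threshold counts as acceptable is a policy decision that the code cannot make.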

However, compliance is not just about the technical aspects of AI development, training and deployment. Use of the technology is also governed by very clear chains of responsibility, and of legal liability.


“The creator of the model is legally liable for its actions under the EU AI Act. This has been made very clear,” he said.

More than that, however, organisations that use AI need to be aware of the possibility that poor results will drive business away.

“There is also consequential liability and the potential for reputational damage.”

Rose said that for this reason traceability is paramount: “If it is created as a service then the question is: who created it and who trained it.”

Ultimately, he said, it is important to consider the ethical implications. “It is crucial to ensure that AI is not discriminating against people, that it’s not causing people to have a problem in their lives.

“You’re not deploying the software, you’re deploying a model that has been trained on data,” he said.

However, models also need to be updated to ensure they meet business goals. “There is also the need to retrain models. If your data was predominantly the holiday preferences of 22 to 30-year-olds and you have a big marketing campaign targeting the over 50s, does your model have the capacity to recommend the right holidays?”
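Rose's holiday example suggests a simple question to ask before a campaign: how much of the training data actually falls within the audience being targeted? The sketch below measures that share by age range and flags when it drops below a threshold. The function names, the 20 per cent threshold and the sample ages are assumptions made for illustration, not figures from Rose.

```python
def segment_share(ages, min_age, max_age=None):
    """Fraction of training records whose age falls in the given range."""
    if not ages:
        return 0.0
    in_segment = sum(
        1 for age in ages
        if age >= min_age and (max_age is None or age <= max_age)
    )
    return in_segment / len(ages)


def needs_retraining(ages, min_age, max_age=None, min_share=0.2):
    """Flag a target segment that is thinly represented in the training data.

    min_share is an arbitrary illustrative threshold.
    """
    return segment_share(ages, min_age, max_age) < min_share


# Made-up ages: mostly 22 to 30-year-olds, with a single customer over 50
training_ages = [22, 24, 25, 26, 27, 28, 29, 30, 30, 55]

print(segment_share(training_ages, 22, 30))
print(segment_share(training_ages, 50))
print(needs_retraining(training_ages, 50))
```

A flag like this does not say the model will fail for the new audience, only that there is little evidence in the training data to support its recommendations for that group.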

If this all sounds rather complex, the other side of the equation is that, by going beyond the low-hanging fruit, organisations will experience the true benefits of AI.

“Organisations may become aware of what it can do and then become frustrated with the more generic products,” he said.

Businesses that do invest, he said, are finding that targeted AI interventions are having a real impact.

“I know of an insurance company that has increased its sales through outbound call centres by 16 per cent by looking at the leads coming in and working from that. They literally put in one AI model, which they trained on their own data, and which ranks the leads by likelihood of sale.”
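Ranking leads by likelihood of sale can be pictured with a small sketch. The insurer's actual model is not described, so the example below stands in a logistic score with hand-picked weights, where a real system would learn its parameters from the company's own data. The feature names, weights and leads are all hypothetical.

```python
import math

# Hypothetical weights; a real model would learn these from historical sales data
WEIGHTS = {"visited_pricing_page": 1.2, "requested_callback": 2.0}
BIAS = -1.5


def sale_probability(lead):
    """Logistic score between 0 and 1 standing in for a trained model's output."""
    z = BIAS + sum(weight * lead.get(name, 0) for name, weight in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def rank_leads(leads):
    """Return leads ordered from most to least likely to convert."""
    return sorted(leads, key=sale_probability, reverse=True)


# Made-up leads, not real data
leads = [
    {"id": "A", "visited_pricing_page": 0, "requested_callback": 0},
    {"id": "B", "visited_pricing_page": 1, "requested_callback": 0},
    {"id": "C", "visited_pricing_page": 1, "requested_callback": 1},
]

for lead in rank_leads(leads):
    print(lead["id"], round(sale_probability(lead), 2))
```

Call-centre staff would then work from the top of the list down, which is where a ranking of this kind has its effect on sales.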